DNG in HCI International 2021 / We will present our research at HCI International 2021, held online

2021. 07. 19

We will present our work at HCI International 2021, the 23rd International Conference on Human-Computer Interaction!

The Digital Nature Group will give seven oral presentations and four poster presentations at HCI International 2021, which is being held online.

Official Web : http://2021.hci.international/

Dates: 2021/07/24 – 2021/07/29 (VIRTUAL)

Presented projects

Parallel sessions with paper presentations

1. Discussion of Intelligent Electric Wheelchairs for Caregivers and Care Recipients

In order to reduce the burden on caregivers, we developed an intelligent electric wheelchair. We held workshops with caregivers, asked them regarding the problems in caregiving, and developed problem-solving methods. In the workshop, caregivers’ physical fitness and psychology of the older adults were found to be problems and a solution was proposed. We implemented a cooperative operation function for multiple electric wheelchairs based on the workshop and demonstrated it at a nursing home. By listening to older adults, we obtained feedback on the automatic driving electric wheelchair. From the results of this study, we discovered the issues and solutions to be applied to the intelligent electric wheelchair.

Project page: https://digitalnature.slis.tsukuba.ac.jp/2017/03/telewheelchair/

Authors: Satoshi Hashizume, Ippei Suzuki, Kazuki Takazawa, Yoichi Ochiai

Presentation details: Parallel sessions with paper presentations

2021/07/25, SUN

21:00 – 23:00 (JST – Tokyo)

HCI in Mobility, Transport and Automotive Systems

S049: HCI Issues and Assistive Systems for Users with Special Needs in Mobility

For details, please see this page.

2. A Case Study of Augmented Physical Interface by Foot Access with 3D Printed Attachment

We propose an attachment creation framework that allows foot access to existing physical interfaces designed for use with hands, such as doorknobs. The levers, knobs, and switches of furniture and electronic devices are designed for the human hand. These interfaces may not be accessible for hygienic and physical reasons. Due to the high cost of parts and initial installation, sensing or automation is not preferable. Therefore, there is a need for a low-cost way to access physical interfaces without hands. We have enabled foot access by extending the hand-accessible interface with 3D-printed attachments. Finally, we proposed a mechanism (component set) that transmits movement from a foot-accessed pedal to an interface with attachments, and we attached it to doorknob, water faucet, and lighting switch interfaces. A case study was conducted to verify the effectiveness of the system, which consisted of 3D-printed attachments and pedals.

Project page: https://digitalnature.slis.tsukuba.ac.jp/2020/05/opening-a-door-without-hand/

Publication: https://digitalnature.slis.tsukuba.ac.jp/2021/07/augmented-physical-interface-hcii2021/

Authors: Tatsuya Minagawa, Yoichi Ochiai

Presentation details: Parallel sessions with paper presentations

2021/07/25, SUN

23:30 – 25:30 (JST – Tokyo)

Design, User Experience, and Usability.

S073: UX Aspects in Product Design

For details, please see this page.

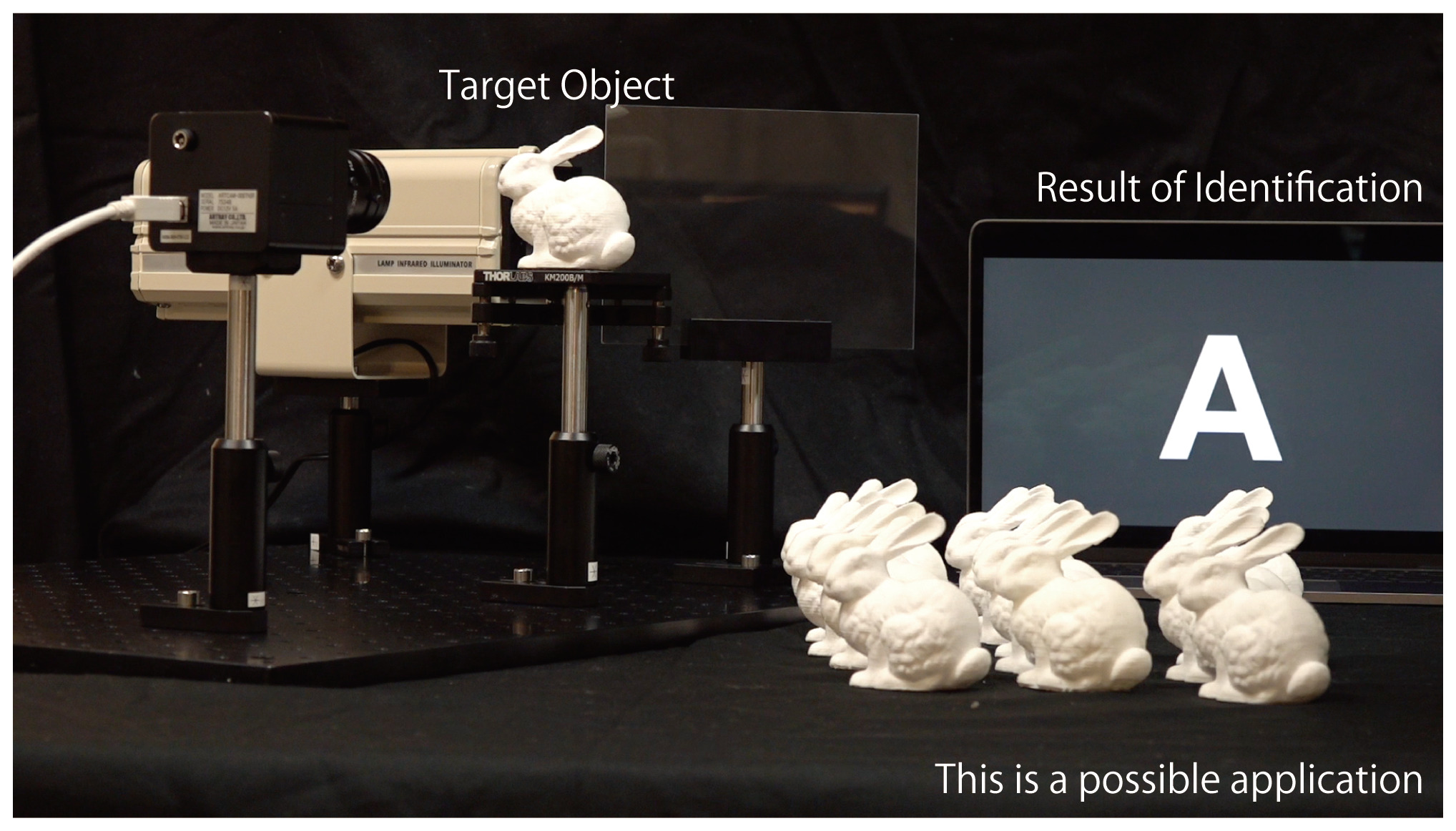

3. A Preliminary Study for Identification of Additive Manufactured Objects with Transmitted Images

Additive manufacturing has the potential to become a standard method for manufacturing products, and product information is indispensable for the item distribution system. While most products are given barcodes on their exterior surfaces, research on embedding barcodes inside products is underway. This is because additive manufacturing makes it possible to manufacture a product and add information to it at the same time, and embedding information inside does not impair the exterior appearance of the product. However, products in which no information has been embedded cannot be identified, and embedded information cannot be rewritten later. In this study, we have developed a product identification system that does not require embedding barcodes inside. This system uses a transmission image of the product, which contains information specific to each product, such as different inner support structures and manufacturing errors. We have shown through experiments that if datasets of transmission images are available, objects can be identified with an accuracy of over 90%. This result suggests that our approach can be useful for identifying objects without embedded information.

Authors: Kenta Yamamoto, Ryota Kawamura, Kazuki Takazawa, Hiroyuki Osone, Yoichi Ochiai

Presentation details: Parallel sessions with paper presentations

2021/07/26, MON

23:30 – 25:30 (JST – Tokyo)

Artificial Intelligence in HCI

S138: Signal-Based AI for HCI

For details, please see this page.

4. Blind-Badminton: A Working Prototype to Recognize Position of Flying Object for Visually Impaired Users

This paper proposes a system for recognizing flying objects during a ball game in blind sports. “Blind sports” is a term that refers to a sport for visually impaired people. There are various types of blind sports, and several of these sports, such as goalball and blind soccer, are registered in the Paralympic Games. This study specifically aimed at realizing games similar to badminton. Various user experiments were conducted to verify the requirements for playing sports that are similar to badminton without visual stimulus. Additionally, we developed a system that provides users with auxiliary information, including height, depth, left and right directions, and swing delay by adopting sound feedback via a binaural sound source, as well as haptic feedback via a handheld device. This study evaluated several conditions including that of a balloon owing to its slow falling speed and adopted a UAV drone that generates flight sounds on its own and adjusts its speed and trajectory during the course of the games. To evaluate playability, this study focused on three points: a questionnaire following the experiment, the error in the drone’s traveling direction, and the racket’s swing direction. From the play results and answers of the questionnaire, it was determined that the users were able to recognize the right and left directions, as well as the depth of the drone using the noise generated by the drone, and that this approach is playable in these situations.

Project page: https://digitalnature.slis.tsukuba.ac.jp/2021/07/blind-badminton/

Publication: https://digitalnature.slis.tsukuba.ac.jp/2021/07/blind-badminton-hcii2021/

Authors: Masaaki Sadasue, Daichi Tagami, Sayan Sarcar, Yoichi Ochiai

Presentation details: Parallel sessions with paper presentations

2021/07/26, MON

23:30 – 25:30 (JST – Tokyo)

Universal Access in Human-Computer Interaction.

S116: Advanced Accessibility Technologies

For details, please see this page.

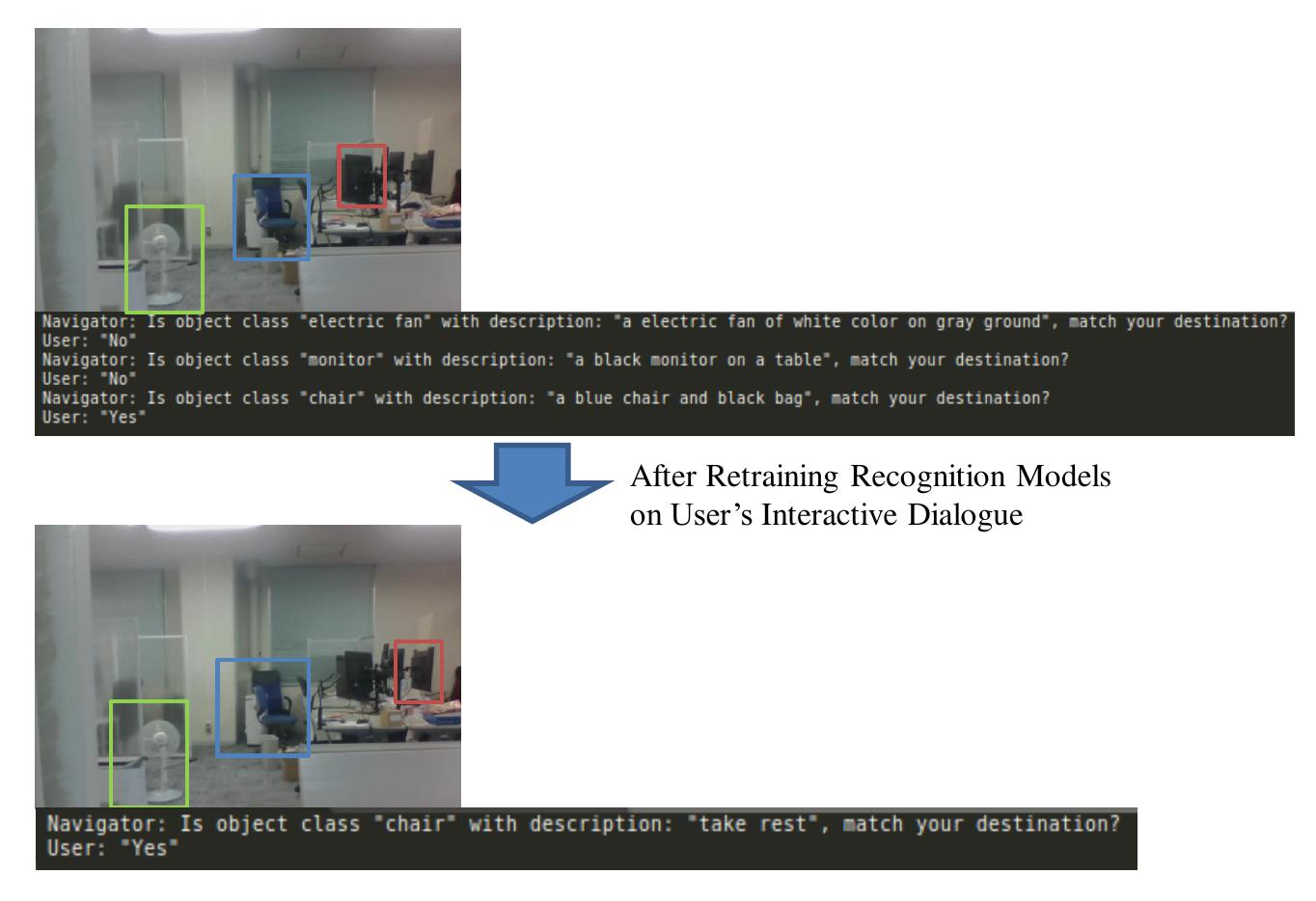

5. Personalized Navigation that Links Speaker’s Ambiguous Descriptions to Indoor Objects for Low Vision People

Indoor navigation systems guide a user to his/her specified destination. However, current navigation systems face challenges when a user provides ambiguous descriptions of the destination. This commonly happens to visually impaired people or those who are unfamiliar with new environments. For example, in an office, a low-vision person may ask the navigator, “Take me to where I can take a rest.” The navigator may recognize each object (e.g., desk) in the office but may not recognize at which location the user can take a rest. To bridge the gap in understanding of the surroundings between low-vision people and a navigator, we propose a personalized interactive navigation system that links a user’s ambiguous descriptions to indoor objects. We build a navigation system that automatically detects and describes objects in the environment using neural-network models. Further, we personalize the navigation by re-training the recognition models based on previous interactive dialogues, which may contain the correspondence between the user’s understanding and the visual images or shapes of objects. In addition, we utilize a GPU cloud to support the computational cost and smooth the navigation by locating the user’s position using Visual SLAM. We discuss further research on customizable navigation with multi-aspect perceptions of disabilities and the limitations of AI-assisted recognition.

Project page: https://digitalnature.slis.tsukuba.ac.jp/2021/07/personalized-navigation

Publication: https://digitalnature.slis.tsukuba.ac.jp/2021/07/personalized-navigation-hcii2021/

Authors: Jun-Li Lu, Hiroyuki Osone, Akihisa Shitara, Ryo Iijima, Bektur Ryskeldiev, Sayan Sarcar, Yoichi Ochiai

Presentation details: Parallel sessions with paper presentations

2021/07/26, MON

23:30 – 25:30 (JST – Tokyo)

Universal Access in Human-Computer Interaction

S116: Advanced Accessibility Technologies

For details, please see this page.

6. EmojiCam: Emoji-assisted video communication system leveraging facial expressions

This study proposes the design of a communication technique that uses graphical icons, including emojis, as an alternative to facial expressions in video calls. Using graphical icons instead of complex and hard-to-read video expressions simplifies and reduces the amount of information in a video conference. The aim was to facilitate communication by preventing quick and incorrect emotional delivery. In this study, we developed EmojiCam, a system that encodes the emotions of the sender with facial expression recognition or user input and presents graphical icons of the encoded emotions to the receiver. User studies and existing emoji cultures were applied to examine the communication flow and discuss the possibility of using emoji in video calls. Finally, we discuss the new value that this model will bring and how it will change the style of video calling.

Project page:

Publication: https://digitalnature.slis.tsukuba.ac.jp/2021/07/emojicam-hcii2021/

Authors: Kosaku Namikawa, Ippei Suzuki, Ryo Iijima, Sayan Sarcar, Yoichi Ochiai

Presentation details: Parallel sessions with paper presentations

2021/07/27, TUE

21:00 – 23:00 (JST – Tokyo)

Human-Computer Interaction

S141: Social Interaction

For details, please see this page.

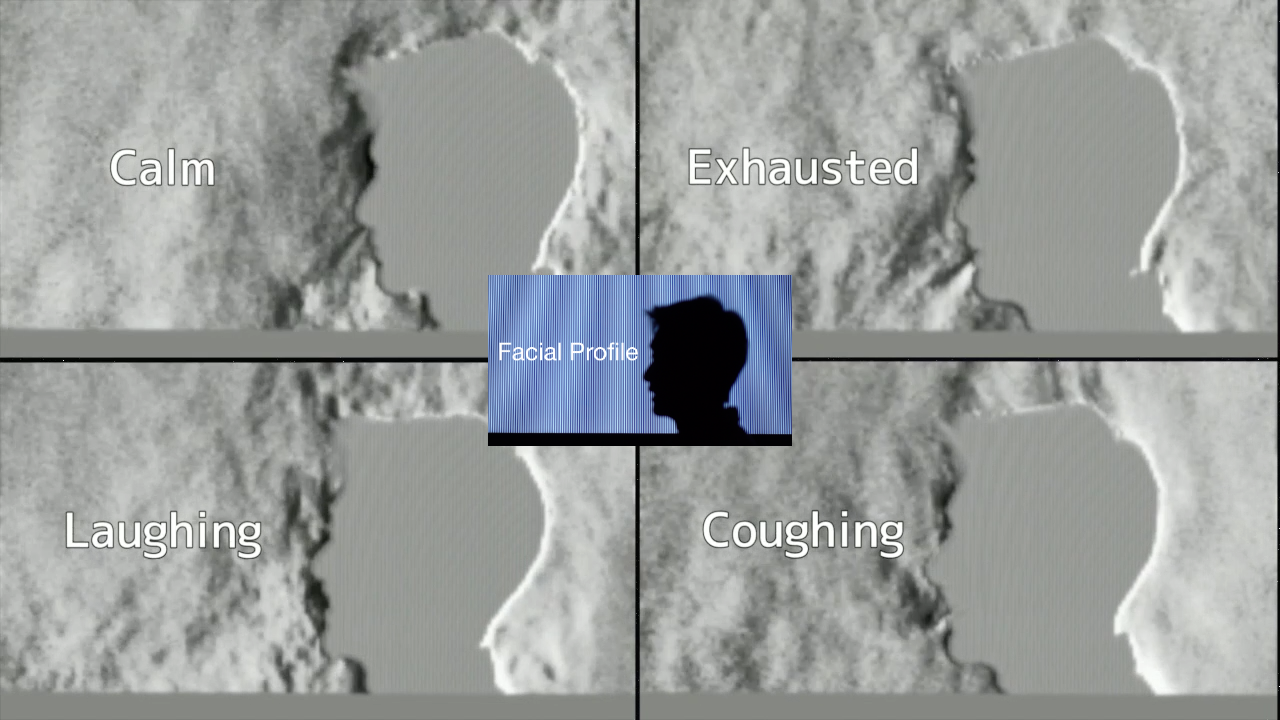

7. Interaction with Objects and Humans based on Visualized Flow using a Background-oriented Schlieren Method

Air flow is a ubiquitous phenomenon that can provide important insights to extend our perceptions and intuitive interactions with our surroundings. This study aimed to explore interaction methods based on flow visualization using background-oriented schlieren (BOS) in case studies. Case 1 involved visualization of the airflow around humans or objects, which demonstrated that visualized flow provides meaningful information about the human or object under investigation. Case 2 involved the testing of a prototype sensor, where visualized flow was used to sense fine airflow. Stabilization of the flow was required for operation of the sensor. Overall, interaction using flow visualization allows for the perception of air flow in a broad sense, and presents new opportunities in the field of human computer interaction.

Project page: https://digitalnature.slis.tsukuba.ac.jp/2021/03/interaction-based-on-bos-method/

Publication: https://digitalnature.slis.tsukuba.ac.jp/2021/07/interaction-based-on-bos-method-hcii2021/

Authors: Shieru Suzuki, Shun Sasaguri, Yoichi Ochiai

Presentation details: Parallel sessions with paper presentations

2021/07/28, WED

23:30 – 25:30 (JST – Tokyo)

Human-Computer Interaction

S239: Input Methods and Techniques – II

For details, please see this page.

POSTER PRESENTATION: 2021/07/24, SAT ~ 2021/07/29, THU (All Day)

For details on the poster presentations, please see the following page.

http://2021.hci.international/poster-presentations.html

1. A Customized VR Rendering with Neural-Network Generated Frames for Reducing VR Dizziness

This research aims to develop a neural network (NN) system that generates smooth frames for VR experiences, in order to reduce the hardware requirements for VR therapy. VR has long been introduced as a therapy for many conditions, such as acrophobia, but it is not yet broadly used. One of the main reasons is that a high and stable frame rate is a must for a comfortable VR experience. A low refresh rate in VR can cause adverse reactions, such as disorientation or nausea. These adverse reactions are known as “VR dizziness”. We plan to develop an NN system that compresses the frame data and automatically generates consecutive frames with extra information in real time. Provided with the extra information, the goal of the compressing NN is to identify and discard textures that are dispensable. Thereafter, it compresses the left and right frames in VR into a data frame with all necessary information. Subsequently, we send the data frame to the client, which feeds it into a rebuilding GAN system and obtains three pairs of frames. After applying some CV methods such as filtering and alias, these frames are shown on the user’s HMD. Furthermore, the compressed data can be transported easily, which allows medical facilities to build a VR computing center for multiple clients, making VR more broadly available for medical use.

Project page:

Publication: https://digitalnature.slis.tsukuba.ac.jp/2021/07/rendering-for-reducing-vr-dizziness-hcii2021/

Authors: Zhexin Zhang, Jun-Li Lu, Yoichi Ochiai

2. Choreography Composed by Deep Learning

Choreography is a type of art in which movement is designed. Because it involves many complex movements, a lot of time is spent creating the choreography. In this study, we constructed a dataset of dance movements with more than 700,000 frames using dance videos of the “Odotte-mita” genre. Our dataset could be very useful for recent research in motion generation, as there has been a lack of dance datasets. Furthermore, we verified the choreography of the dance generated by the deep learning method (acRNN) by physically repeating the dance steps. To the best of our knowledge, this is the first time such a verification has been attempted. It became clear that the choreography generated by machine learning had unique movements and rhythms for dancers. In addition, dancing machine-learning-generated choreography provided an opportunity to make new discoveries. Moreover, the fact that the model was a stick figure made the choreography vague, and, although it was difficult to remember, it created varied dance steps.

Project page:

Publication: https://digitalnature.slis.tsukuba.ac.jp/2021/07/choreography-composed-by-deep-learning-2/

Authors: Ryosuke Suzuki, Yoichi Ochiai

3. Development of a Telepresence System Using a Robotic Embodiment Controlled by Mobile Devices

This study proposes a telepresence system controlled by mobile devices consisting of a conference side and a remote side. The conference side of this system consists of a puppet-type robot that enhances the co-telepresence. The remote side includes a web application that can be accessed by a mobile device that can operate the robot by using a motion sensor. The effectiveness of the robot-based telepresence techniques in the teleconference applications is analyzed by conducting user surveys of the participants in remote and real-world situations. It is observed from the experimental results that the proposed telepresence system enhances the coexistence of remote participants and allows them to attend the conference enjoyably.

Project page:

Publication: https://digitalnature.slis.tsukuba.ac.jp/2021/07/tablet-telepresence-hcii2021/

Authors: Tatsuya Minagawa, Ippei Suzuki, Yoichi Ochiai

4. Printed Absorbent: Inner Fluid Design with 3D Printed Object

Printed Absorbent is a novel concept and approach to interactive material utilizing fluidic channels. In this study, we created 3D-printed objects with fluidic mechanisms that can absorb fluids to allow for various new applications. First, we demonstrated that capillary action, based on the theoretical formula, could be produced with 3D-printed objects under various conditions using fluids with different physical properties and different sizes of flow paths. Second, we verified this phenomenon using real and simulated experiments for seven defined flow channels. Finally, we described our proposed interaction methods, the limitations in the design of fluidic structures, and their potential applications.

Project page: https://digitalnature.slis.tsukuba.ac.jp/2017/09/printed-absorbent/

Publication: https://digitalnature.slis.tsukuba.ac.jp/2021/07/printed-absorbent-hcii2021/

Authors: Kohei Ogawa, Tatsuya Minagawa, Hiroki Hasada, Yoichi Ochiai

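Lab overview

Name : Digital Nature Group

Representative : Associate Professor Yoichi Ochiai

Address : 1-2 Kasuga, Tsukuba, Ibaraki, Japan

Research areas : Wave engineering, digital fabrication, and spatial research and development using artificial intelligence technology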

URL : https://digitalnature.slis.tsukuba.ac.jp/

Biography of the representative

落合陽一 Yoichi Ochiai

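Born in 1987. In 2015 he completed the doctoral program at the Graduate School of Interdisciplinary Information Studies, The University of Tokyo (the first early completion in the school's history), receiving a Ph.D. in Interdisciplinary Information Studies. After serving as a JSPS Research Fellow (DC1) and as a Research Intern at Microsoft Research in the United States, among other positions, he became an Assistant Professor at the Faculty of Library, Information and Media Science, University of Tsukuba, in 2015, where he leads the Digital Nature Group. In 2015 he founded Pixie Dust Technologies, Inc. and serves as its CEO. He served as Advisor to the President of the University of Tsukuba from 2017 to 2019, and he concurrently holds positions as Visiting Professor at Osaka University of Arts (since 2017), Project Professor at Digital Hollywood University and Visiting Professor at Kanazawa College of Art (since 2020), and Visiting Professor at Kyoto City University of Arts (since April 2021). Since December 2017 he has concurrently served as head of the Strategic Research Platform towards Digital Nature, powered by Pixie Dust Technologies, Inc., at the University of Tsukuba, and as Associate Professor. In June 2020 he was appointed Director of the R&D Center for Digital Nature. His specialties are CG, HCI, VR, visual, auditory, and tactile presentation methods, digital fabrication, autonomous driving, and body control.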

Contact for inquiries regarding this announcement

Name : Digital Nature Group, University of Tsukuba

Email : contact<-at->digitalnature.slis.tsukuba.ac.jp (please replace <-at-> with @)
