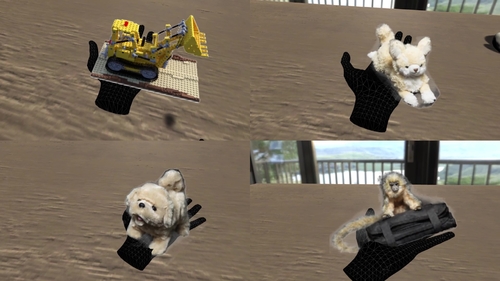

An aspirational goal for virtual reality (VR) is to bring a rich diversity of real-world objects into virtual environments losslessly. Existing VR applications often convert real-world objects into explicit 3D models with meshes, which allows fast interactive rendering but severely limits rendering quality and the types of objects that can be supported, fundamentally upper-bounding the “realism” of the VR experience. Inspired by the classic “billboards” technique in gaming, as well as implicit neural rendering models and image-based “world” models, we present Deep Billboards, which model 3D objects implicitly using neural networks and render only a single 2D image at a time based on the user’s viewing direction. Our system, which connects a commercial VR headset to a server running neural rendering, allows real-time, high-resolution simulation of detailed rigid objects, hairy objects, actuated dynamic objects, and more in an interactive VR world, drastically narrowing the existing real-to-simulation (real2sim) gap while preserving smooth interactivity. Additionally, we augment Deep Billboards with physical interaction capability, adapting classic billboards from screen-based games to immersive VR. To the best of our knowledge, we are the first to implement, evaluate, and open-source a functional system for high-quality implicit neural rendering in interactive VR applications, bringing the rich real world into the Metaverse with less loss and extending the impact of neural rendering models beyond static 3D reconstruction to interactive simulation such as VR and gaming.

Authors: Naruya Kondo*, So Kuroki**, Ryosuke Hyakuta*, Yutaka Matsuo**, Shixiang Shane Gu** ***, Yoichi Ochiai*

*University of Tsukuba, **The University of Tokyo, ***Google Brain