Abstract:

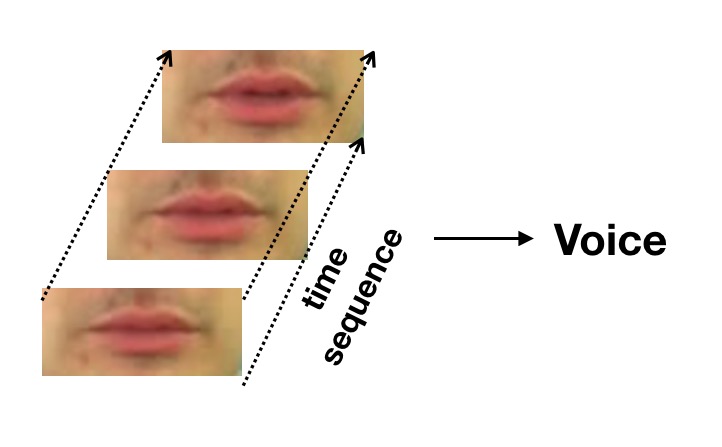

In this paper, we designed a system called LipSpeaker to help acquired voice disorder people to communicate in daily life. Acquired voice disorder users only need to face the camera on their smartphones, and then use their lips to imitate the pronunciation of the words. LipSpeaker can recognize the movements of the lips and convert them to texts, and then it generates audio to play. Compared to texts, mel-spectrogram is more emotionally informative. In order to generate smoother and more emotional audio, we also use the method of predicting mel-spectrogram instead of texts through recognizing users’ lip movements and expression together.

本論文では,後天性の音声障害者が日常生活でコミュニケーションをとることを支援する,LipSpeakerというシステムを設計しました.後天性の音声障害ユーザーは,スマートフォンでカメラに向き,唇を使って単語の発音を模倣するだけです.LipSpeakerは唇の動きを認識してテキストに変換し,音声を生成して再生します.テキストと比較すると,メル‐スペクトログラムはより感情的な情報になります.より滑らかで感情的な音声を生成するために,ユーザーの唇の動きと表情を一緒に認識して,テキストではなくメルスペクトログラムを予測する方法も使用します.

Yaohao Chen, Junjian Zhang, Yizhi Zhang, Yoichi Ochiai

HONORS

“LipSpeaker” won Best Poster Award of the HCII 2019 Conference

Yaohao Chen, Junjian Zhang, Yizhi Zhang, Yoichi Ochiai : LipSpeaker: Helping Acquired Voice Disorders People Speak Again, Best Poster Award, in the context of HCI International 2019, 26-31 July 2019, Orlando, FL, USA.